One AI Agent Handling Everything Is Not Efficient. It's a Single Point of Failure.

Many long-running AI agent workflows hit a wall: tasks degrade mid-execution, contradictions creep in, and failures become impossible to debug. The usual cause is context drift. The fix is subagent delegation.

There is a pattern most people hit around the same point in their AI agent journey.

The first few weeks feel like magic. You give your agent a task — research this, summarize that, draft a response — and it just does it. You start building real workflows. You run longer and longer tasks in a single session, keeping the context alive so you can refer back to earlier decisions.

And then, somewhere in the middle of a complex multi-step job, things get weird.

The agent references a constraint it invented. It redoes work it already did. It confidently produces output that contradicts something it decided 45 minutes ago. You re-read the conversation, squinting, trying to figure out where things went sideways. The task was not that hard. The model is clearly capable. So what happened?

Context drift happened. And the fix is not a better prompt.

What Context Drift Actually Is

A language model does not "remember" the way a database does. It attends to its context window: the full transcript of the conversation or task execution so far, fed back to the model on every step.

Early in a session, that window is clean. The model sees the task, its own reasoning, and the output it has produced so far. Then every tool call adds output, every decision adds reasoning, and every round-trip (a file read, a web search, an API call) adds more. By the time you are 60,000 tokens into a complex task, the things you established in the first 5,000 are fighting for attention with everything that came after them. And they lose.
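To put rough numbers on that, here is a minimal sketch. It assumes a 5,000-token setup and a flat 1,000 tokens added per tool round-trip. Both figures are illustrative, and `setup_share` is a hypothetical helper, not part of any framework. Token share is only a crude proxy for how much attention the setup actually gets, but it shows how quickly the early material becomes a small slice of the window.

```python
def setup_share(setup_tokens: int, tokens_per_step: int, steps: int) -> float:
    """Fraction of the context window taken up by the initial setup
    after `steps` tool round-trips, each adding `tokens_per_step` tokens."""
    total = setup_tokens + tokens_per_step * steps
    return setup_tokens / total


# Illustrative numbers only: 5,000 setup tokens, 1,000 tokens per round-trip.
for steps in (0, 5, 20, 55):
    share = setup_share(5_000, 1_000, steps)
    print(f"{steps:>2} round-trips: setup is {share:.1%} of the window")
```

Under these assumptions, 55 round-trips bring the window to 60,000 tokens, and the setup that once was the whole context is about 8% of it.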

Researchers have documented this as "lost in the middle" behavior (Liu et al., 2023): models recall content best at the beginning and end of their context, and recall drops for content in the middle. Your early decisions, your agreed-upon approach, your established constraints: those end up in the middle by the time a long task is running. And they fade.

The result is context drift: an agent that started with a correct understanding of what it was supposed to do, and slowly developed a slightly different one without ever flagging the change. When you run long workflows in a single session — say, refactoring 40 files, or producing a complete piece of content from research to publication — the degradation is predictable. The session that felt coherent becomes the failure you cannot debug.

A Main Agent That Delegates

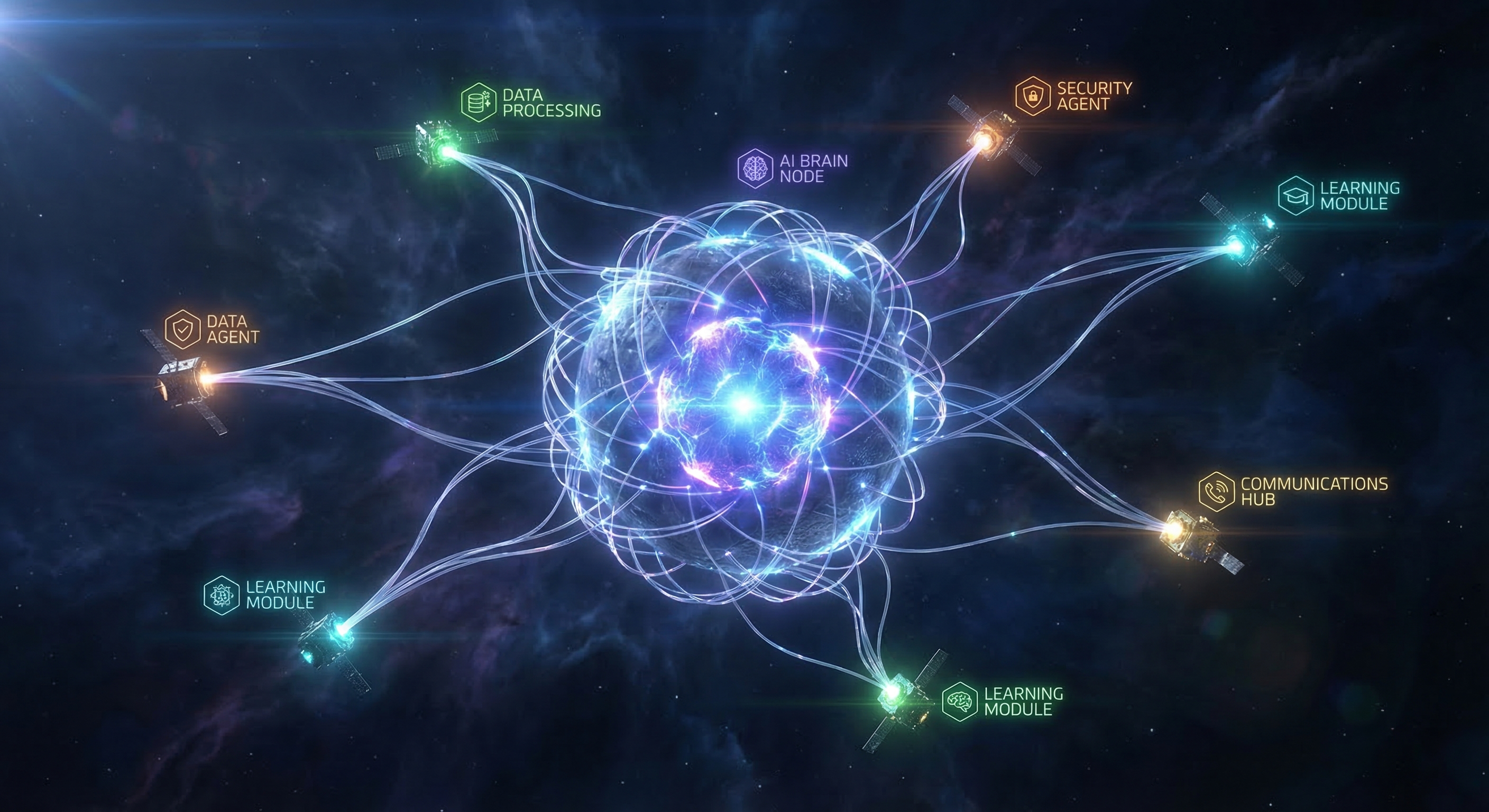

The subagent model inverts this.

Instead of one agent accumulating context for hours, you have a lightweight orchestrator — the main agent — that plans the work, then delegates specific pieces to isolated subagents. Each subagent gets exactly what it needs: a clear task, the relevant inputs, and a clean context window. It executes, produces output, and returns a summary to the main agent.

The main agent never drowns. Its context stays manageable because it is receiving summaries, not watching every tool call play out in its own session. The subagent stays sharp because it starts fresh and works on exactly one scoped job.
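The shape of that boundary can be sketched in a few lines. This is a toy model, not a real agent framework: `Subagent`, `MainAgent`, and `fake_tool_loop` are hypothetical names, and the tool loop is a stand-in for whatever model-plus-tools loop you actually run. The point is only to show which side the transcript accumulates on.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Subagent:
    """Isolated worker. `tool_loop` is a hypothetical stand-in for the real
    model-plus-tools loop; it returns the transcript entries a task produces."""
    tool_loop: Callable[[str], List[str]]

    def execute(self, task: str) -> str:
        context = [task]                      # clean window: only the task
        context.extend(self.tool_loop(task))  # the noise accumulates here
        return f"{task}: done after {len(context) - 1} tool calls"


@dataclass
class MainAgent:
    context: List[str] = field(default_factory=list)

    def delegate(self, worker: Subagent, task: str) -> str:
        summary = worker.execute(task)
        self.context.append(summary)          # only the summary crosses over
        return summary


def fake_tool_loop(task: str) -> List[str]:
    # Pretend every task takes 50 tool calls.
    return [f"{task} / tool call {i}" for i in range(50)]


main = MainAgent()
worker = Subagent(tool_loop=fake_tool_loop)
for task in ("research", "draft", "open PR"):
    main.delegate(worker, task)

print(len(main.context))  # 3 summaries, not 150 transcript entries
```

Each call to `execute` builds a fresh context list, so nothing one task produced leaks into the next.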

The cognitive load is distributed across purpose-built workers instead of concentrated in a single overloaded session. Reliable distributed systems depend on the same isolation principle. It just took a while to realize that it applies to AI agent architectures too.

What This Looks Like in Practice

We run our content production pipeline this way.

A weekly blog post involves: web research, topic selection, hook generation, full draft, hero image generation, repository integration, PR creation, and a Slack notification — followed by a cron job that waits for the PR to merge before publishing the LinkedIn post.

In a single session, this would accumulate hundreds of tool calls, multiple file reads and writes, image generation outputs, and git operations — all in one growing context. By the time we get to PR creation, the agent has already seen enough noise to start making subtle errors: wrong branch names, mismatched file paths, off-by-one mistakes in blog metadata.

Instead, the main agent spawns a single subagent for the entire content task. That subagent runs in its own isolated session with a clean context window. It handles all the tool calls, all the intermediate state, every step from research to the final commit. If it hits 80% context utilization halfway through, that is the subagent's problem to manage — the main agent is not affected.

At completion, the main agent receives a clean summary: what was done, what PR was opened, where the files live. The main session context stays bounded. The work gets done without the drift.
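One way to keep that summary clean is to give it a fixed shape. The sketch below is an assumption about what such a format could look like, not the format we actually use: `TaskSummary` and `to_handoff` are hypothetical names, and the URL and paths are placeholders.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class TaskSummary:
    done: List[str]   # what was done, one short line per step
    pr_url: str       # which PR was opened
    files: List[str]  # where the files live


def to_handoff(summary: TaskSummary) -> str:
    """Serialize the summary to compact JSON for the main agent's context."""
    return json.dumps(asdict(summary), separators=(",", ":"))


example = TaskSummary(
    done=["researched topic", "wrote draft", "generated hero image"],
    pr_url="https://example.com/placeholder-pr",               # placeholder
    files=["posts/placeholder.md", "images/placeholder.png"],  # placeholders
)
handoff = to_handoff(example)
print(handoff)
```

A fixed set of fields means the main agent's context grows by a small, predictable amount per delegated task, no matter how many tool calls the subagent made.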

The PR-waiting phase works differently. Polling GitHub every 30 minutes until a PR merges is not a job for a long-lived session — it is a job for an isolated cron task. The cron fires, checks one thing, takes one action, terminates. No accumulated state. No growing context. No drift.
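A single cron invocation might look like the sketch below. The two callables are hypothetical hooks: `pr_is_merged` would wrap whatever GitHub check you use, and `publish` would send the LinkedIn post. The marker file is our own assumption, added so that a cron entry that keeps firing after the merge does not publish twice. Removing the cron entry after the first success would work just as well.

```python
import tempfile
from pathlib import Path
from typing import Callable


def run_check(pr_is_merged: Callable[[], bool],
              publish: Callable[[], None],
              marker: Path) -> str:
    """One cron invocation: check one thing, take at most one action, exit."""
    if marker.exists():
        return "already published"
    if not pr_is_merged():
        return "waiting"
    publish()
    marker.touch()  # the only state kept between runs
    return "published"


# Simulate three cron firings: PR still open, PR merged, then a late firing.
calls = []
with tempfile.TemporaryDirectory() as tmp:
    marker = Path(tmp) / "published.marker"
    results = [
        run_check(lambda: False, lambda: calls.append("post"), marker),
        run_check(lambda: True, lambda: calls.append("post"), marker),
        run_check(lambda: True, lambda: calls.append("post"), marker),
    ]

print(results)  # ['waiting', 'published', 'already published']
```

Nothing here carries a conversation forward. Every firing starts from zero and reads one bit of state from disk.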

The overhead of subagent setup is real but small. The reliability gain compounds over time.

Three Things That Shift When You Start Delegating

→ Long tasks stop degrading mid-execution. Each subagent runs with a focused context window scoped exactly to its job. The patterns established at the start of the task are still fresh when it finishes, because they are the only things in the window.

→ Failures become scoped and debuggable. When a subagent fails, the failure is contained. You get a clean error from an isolated session rather than a mysterious drift buried in a 200,000-token transcript. Finding what went wrong takes minutes, not hours.

→ Parallelization becomes possible. The architecture opens the door to running independent jobs concurrently — a monitoring task alongside a drafting task, for example. Steps that are not causally dependent on each other do not have to wait in a single-session queue. This is a design choice you can make as your workflows mature; sequential subagent delegation alone already delivers most of the reliability benefit.
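The second and third points can be sketched together. In the example below, `run_isolated` turns any exception inside a worker into a small, structured outcome, and `run_independent` runs several such workers concurrently with the standard library's `ThreadPoolExecutor`. The names `Outcome`, `run_isolated`, `run_independent`, and `demo_worker` are hypothetical, and the worker is a stub standing in for a real subagent session.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Outcome:
    task: str
    ok: bool
    detail: str  # summary on success, error text on failure


def run_isolated(task: str, worker: Callable[[str], str]) -> Outcome:
    """Run one worker; a failure is captured instead of propagating."""
    try:
        return Outcome(task, True, worker(task))
    except Exception as exc:
        return Outcome(task, False, f"{type(exc).__name__}: {exc}")


def run_independent(tasks: List[str],
                    worker: Callable[[str], str]) -> List[Outcome]:
    """Run tasks that do not depend on each other concurrently.
    Results come back in the same order as `tasks`."""
    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        return list(pool.map(lambda t: run_isolated(t, worker), tasks))


def demo_worker(task: str) -> str:
    # Stub: one task fails so the containment is visible.
    if task == "broken":
        raise ValueError("branch not found")
    return f"{task} finished"


outcomes = run_independent(["monitor", "draft", "broken"], demo_worker)
for outcome in outcomes:
    print(outcome)
```

The failing task produces one short error line tied to one task name, and the other two outcomes are unaffected.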

The Honest Caveat

Subagent delegation is not free complexity.

You need to think carefully about what information each subagent actually needs. Over-specifying the handoff bloats the subagent's context before it even starts. Under-specifying it means the subagent makes assumptions that diverge from what the main agent intended.

The main agent also needs to interpret subagent results correctly. If it cannot tell whether the job was done properly, delegation just pushes the failure mode to a different layer.

The work required to make delegation reliable — clear task scoping, structured output formats, explicit success criteria — is real. But with subagents, isolation gives you a fallback even when the handoff is imperfect. With a single long-running session, there is no fallback. When drift happens, the session is already contaminated.
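Here is a minimal sketch of what "clear task scoping, structured output formats, explicit success criteria" could look like in code. `TaskSpec` and `failed_criteria` are hypothetical names, and the fields are assumptions about a reasonable handoff, not a prescribed schema.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Result = Dict[str, Any]


@dataclass
class TaskSpec:
    goal: str                         # clear task scoping
    inputs: Dict[str, str]            # only what the subagent needs
    required_fields: Tuple[str, ...]  # structured output format
    criteria: Dict[str, Callable[[Result], bool]] = field(default_factory=dict)


def failed_criteria(spec: TaskSpec, result: Result) -> List[str]:
    """Return the names of everything the result fails; empty means accept."""
    missing = [f"missing field: {name}"
               for name in spec.required_fields if name not in result]
    if missing:
        return missing  # do not run checks against an incomplete result
    return [name for name, check in spec.criteria.items() if not check(result)]


spec = TaskSpec(
    goal="Draft the weekly post and open a PR",
    inputs={"topic": "subagent delegation"},
    required_fields=("pr_url", "files"),
    criteria={"at least one file committed": lambda r: len(r["files"]) > 0},
)

print(failed_criteria(spec, {"files": ["post.md"]}))
print(failed_criteria(spec, {"pr_url": "placeholder", "files": []}))
print(failed_criteria(spec, {"pr_url": "placeholder", "files": ["post.md"]}))
```

The main agent only has to read a short list of failed criteria to decide whether to accept the result, retry, or escalate.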

Higher setup cost. Dramatically higher reliability ceiling.

The Bottom Line

A single agent session is a shared resource. Everything — planning, execution, intermediate state — competes for the same context window. As tasks grow longer, that shared resource becomes a bottleneck and then a liability.

Subagents give each piece of work its own failure domain. The main agent stays light and authoritative. The workers stay focused. Failures stay scoped.

If you have been running complex multi-step tasks in a single session and hitting mysterious mid-task degradations, context drift is the most likely cause. The fix is not a better system prompt. It is a better architecture.

If you have built multi-agent workflows — either in OpenClaw or elsewhere — I would genuinely like to hear how you have structured the handoffs. What do you pass between agents? What format works for summaries? Drop a comment. The real lessons here are in the details.

Running this kind of automation yourself but spending too much time on infrastructure instead of the work? OctoClaw gives you a fully hosted OpenClaw instance in minutes — pre-configured, persistent, and ready to start delegating from day one.
